Building a DynamoDB conformance suite

When I started building Dynoxide - a DynamoDB-compatible engine in Rust - I kept running into the same problem. I'd implement an operation, write tests against my understanding of DynamoDB's behaviour, and ship it. Then someone (usually me) would discover that real DynamoDB does something slightly different. A validation error with a different message. A condition expression that fails where I expected it to succeed. An edge case in number precision that I'd never considered.

Every emulator ships with a "DynamoDB compatible" label, and you're expected to take its word for it. I needed a way to answer a simple question: does Dynoxide actually behave like DynamoDB?

No public conformance suite exists for DynamoDB - not from AWS, not from the community.

So I built one.

The idea

The principle is straightforward. Write a test. Run it against real DynamoDB. Record the result. That recorded result becomes the ground truth. Then run the same test against an emulator and compare.

If the emulator's response matches DynamoDB's, the test passes. If it doesn't, the emulator has a conformance gap. No opinions, no guesswork about what the documentation means - just "does this behave the same way the real thing does?"
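The record-and-compare loop can be sketched in a few lines. This is a minimal illustration, not the suite's actual implementation: the `Outcome` type and the `capture` and `conforms` helpers are hypothetical names invented here, and the sketch assumes responses are plain JSON-like values.

```typescript
// Hypothetical shape for a normalised outcome; not the suite's actual types.
type Outcome =
  | { kind: "ok"; body: unknown }
  | { kind: "error"; name: string; message: string };

// Structural equality over JSON-like values, ignoring object key order.
function deepEqual(a: unknown, b: unknown): boolean {
  if (a === b) return true;
  if (typeof a !== "object" || typeof b !== "object" || a === null || b === null) {
    return false;
  }
  if (Array.isArray(a) !== Array.isArray(b)) return false;
  const aObj = a as Record<string, unknown>;
  const bObj = b as Record<string, unknown>;
  const aKeys = Object.keys(aObj);
  const bKeys = Object.keys(bObj);
  if (aKeys.length !== bKeys.length) return false;
  return aKeys.every((k) => k in bObj && deepEqual(aObj[k], bObj[k]));
}

// Run an operation and capture either its response or its error.
async function capture(op: () => Promise<unknown>): Promise<Outcome> {
  try {
    return { kind: "ok", body: await op() };
  } catch (e) {
    const err = e as Error;
    return { kind: "error", name: err.name, message: err.message };
  }
}

// An emulator conforms on a test if its outcome equals the recorded one.
function conforms(recorded: Outcome, actual: Outcome): boolean {
  return deepEqual(recorded, actual);
}
```

Treating errors as data rather than as test failures is the point of `capture`: a rejected request is a legitimate recorded result, and the emulator is expected to reject it in the same way.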

I built it as a standalone project - dynamodb-conformance - so anyone can clone it, point it at their own DynamoDB-compatible endpoint, and get results. It is written in TypeScript against the AWS SDK for JavaScript v3, with vitest as the test runner. Nothing exotic.
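Pointing the same tests at a different target mostly comes down to the client configuration. The sketch below shows one way that could look. The `TARGET_ENDPOINT` variable name and the `configFromEnv` helper are assumptions made for this example, not the suite's real configuration; check the repository's README for the actual settings. The `region`, `endpoint`, and `credentials` fields are the ones the SDK's `DynamoDBClient` constructor accepts.

```typescript
// Plain-object mirror of the fields passed to the SDK client constructor.
interface ClientConfig {
  region: string;
  endpoint?: string;
  credentials?: { accessKeyId: string; secretAccessKey: string };
}

// TARGET_ENDPOINT is a hypothetical variable name chosen for this sketch.
function configFromEnv(env: Record<string, string | undefined>): ClientConfig {
  const region = env["AWS_REGION"] ?? "eu-west-2";
  const endpoint = env["TARGET_ENDPOINT"];
  if (!endpoint) {
    // No override: let the SDK resolve the real regional endpoint and credentials.
    return { region };
  }
  // Local emulators typically accept any credentials, so dummy values are used here.
  return {
    region,
    endpoint,
    credentials: { accessKeyId: "local", secretAccessKey: "local" },
  };
}

// Would be passed to `new DynamoDBClient(...)` from "@aws-sdk/client-dynamodb".
const localConfig = configFromEnv({ TARGET_ENDPOINT: "http://localhost:8000" });
console.log(localConfig.endpoint);
```

Port 8000 is DynamoDB Local's default; other emulators listen elsewhere.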

Tiers

Not all DynamoDB operations are equally important. If your emulator can't do a basic PutItem, nothing else matters. If it doesn't perfectly replicate the error message format for an obscure validation edge case, that's less critical.

So the suite is split into three tiers.

Tier 1 covers core CRUD operations - CreateTable, PutItem, GetItem, UpdateItem, DeleteItem, Query, Scan, BatchGetItem, BatchWriteItem. These are the operations every application uses. If an emulator fails here, it's not usable for real development.

Tier 2 covers advanced features - transactions, PartiQL, streams, tags, TTL, and table updates like billing mode changes. These are features that production applications rely on but that you might not hit in a simple prototype.

Tier 3 covers edge cases and error fidelity - validation ordering, exact error message formatting, API limits, and legacy API behaviour. The things that only matter when you need your emulator to be indistinguishable from the real service.

This tiered structure means a result like "100% Tier 1, 95% Tier 2, 80% Tier 3" actually tells you something useful. It means core operations work perfectly, advanced features are mostly there, and edge cases have some gaps. A flat "92% overall" hides whether the failures are in PutItem or in obscure validation corner cases.
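To make the difference concrete, here is a small sketch of a per-tier summary next to a flat one. The `TestResult` shape and the sample data are made up for illustration and chosen to reproduce the "100% / 95% / 80%" example; they are not the suite's real reporter or real results.

```typescript
type Tier = 1 | 2 | 3;
interface TestResult {
  tier: Tier;
  passed: boolean;
}

// Pass rate as a percentage string with one decimal place.
function passRate(results: TestResult[]): string {
  if (results.length === 0) return "n/a";
  const passed = results.filter((r) => r.passed).length;
  return `${((passed / results.length) * 100).toFixed(1)}%`;
}

// One rate per tier, plus the flat overall rate for comparison.
function summarise(results: TestResult[]): Record<string, string> {
  const summary: Record<string, string> = {};
  for (const tier of [1, 2, 3] as Tier[]) {
    summary[`tier${tier}`] = passRate(results.filter((r) => r.tier === tier));
  }
  summary["overall"] = passRate(results);
  return summary;
}

// Made-up data: 10/10 in Tier 1, 19/20 in Tier 2, 16/20 in Tier 3.
const make = (tier: Tier, pass: number, total: number): TestResult[] =>
  Array.from({ length: total }, (_, i) => ({ tier, passed: i < pass }));

const sample = [...make(1, 10, 10), ...make(2, 19, 20), ...make(3, 16, 20)];
console.log(summarise(sample));
```

The overall figure for this sample is 90.0%, which on its own says nothing about all five failures sitting outside Tier 1.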

Ground truth

The key design decision was making DynamoDB itself the source of truth rather than my interpretation of the documentation.

Every test in the suite has been run against a real DynamoDB table in eu-west-2. The expected values in the tests aren't what I think DynamoDB should return based on reading the docs - they're what DynamoDB actually returns. This matters more than you'd expect. DynamoDB's documentation is good, but it doesn't cover every edge case. Sometimes the only way to know what DynamoDB does is to try it and see.

A scheduled CI job runs the full suite against real DynamoDB weekly, so if AWS changes something - a new validation, a different error message, a behavioural tweak - the ground truth stays current. The suite can't go stale the way a set of hand-written expected values would.

Results

Here's where it gets interesting.

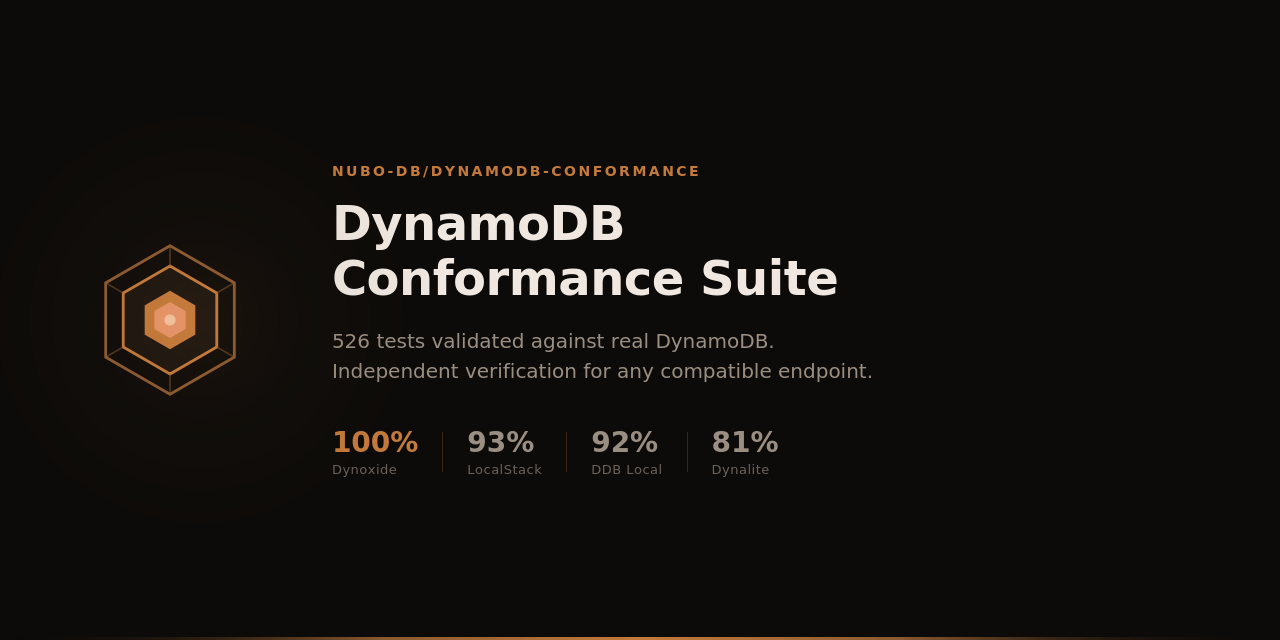

| Target | Tier 1 (267) | Tier 2 (93) | Tier 3 (166) | Overall |
|---|---|---|---|---|
| DynamoDB (eu-west-2) | 100% | 100% | 100% | 526 / 526 |
| Dynoxide | 100% | 100% | 100% | 526 / 526 |
| LocalStack | 98.9% | 95.7% | 81.9% | 489 / 526 |
| DynamoDB Local | 98.9% | 90.3% | 81.9% | 484 / 526 |
| Dynalite | 98.1% | 10.8% | 92.8% | 426 / 526 |

Dynoxide passes every test. 526 out of 526, across all three tiers.

DynamoDB Local - AWS's own emulator - fails 42. LocalStack fails 37. Dynalite, which hasn't been actively maintained for a while, fails 100 (and that Tier 2 number of 10.8% tells you it's missing entire feature categories like transactions and PartiQL).

I want to be careful about what this means and what it doesn't.

526 tests is a lot, but DynamoDB is a massive service. There are aspects of it that the suite doesn't cover - DAX compatibility, on-demand capacity semantics, cross-region replication, fine-grained IAM condition keys. "100% conformance on 526 tests" is accurate. "100% DynamoDB compatible" is not, and I wouldn't claim it.

What the results do tell you is that for the operations and behaviours the suite covers - which include everything most applications use day to day - Dynoxide matches DynamoDB exactly. Not approximately. Exactly.

What DynamoDB Local gets wrong

Some of DynamoDB Local's 42 failures are genuinely surprising. This is AWS's own emulator. You'd expect it to be the gold standard for local DynamoDB development. In practice, it has real gaps across all three tiers.

All three of its Tier 1 failures are in ItemCollectionMetrics - it doesn't return them for PutItem, DeleteItem, or UpdateItem even when you ask for them with ReturnItemCollectionMetrics: SIZE. That's not an edge case. That's a documented feature of the API that silently does nothing.
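A check for this behaviour might look like the sketch below. It uses minimal local mirrors of the SDK's response shape so it runs without the SDK installed; the table name, key values, and the `hasItemCollectionMetrics` helper are placeholders invented for this example. One assumption worth stating: per the API documentation, DynamoDB only returns these metrics for tables that have a local secondary index, so the test table needs one.

```typescript
// Minimal local mirrors of the SDK shapes; only the fields this check needs.
interface ItemCollectionMetrics {
  ItemCollectionKey?: Record<string, unknown>;
  SizeEstimateRangeGB?: number[];
}
interface WriteOutput {
  ItemCollectionMetrics?: ItemCollectionMetrics;
}

// Request input for PutItem; table and attribute names are placeholders.
const putInput = {
  TableName: "ExampleTableWithLsi",
  Item: { pk: { S: "user#1" }, sk: { S: "order#1" } },
  ReturnItemCollectionMetrics: "SIZE" as const,
};

// True when the response carries a size estimate with a lower and an upper bound.
function hasItemCollectionMetrics(out: WriteOutput): boolean {
  const range = out.ItemCollectionMetrics?.SizeEstimateRangeGB;
  return Array.isArray(range) && range.length === 2;
}

// Illustrative responses: one with metrics, one where the field is silently absent.
const withMetrics: WriteOutput = {
  ItemCollectionMetrics: {
    ItemCollectionKey: { pk: { S: "user#1" } },
    SizeEstimateRangeGB: [0, 1],
  },
};
const withoutMetrics: WriteOutput = {};

console.log(putInput.ReturnItemCollectionMetrics, hasItemCollectionMetrics(withMetrics));
console.log(hasItemCollectionMetrics(withoutMetrics));
```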

In Tier 2, the entire tagging API is broken. All eight tag-related tests fail - TagResource, UntagResource, ListTagsOfResource. If you're writing infrastructure-as-code that tags tables and you're testing against DynamoDB Local, none of that is being validated. It also gets PartiQL INSERT semantics wrong - it treats INSERT as an upsert when real DynamoDB correctly rejects an INSERT on an existing item.
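The INSERT difference is easy to see in a toy model. The in-memory `ToyTable` below is purely illustrative and is not how either DynamoDB or DynamoDB Local is implemented. `DuplicateItemException` is the error name the ExecuteStatement API documents for inserting over an existing key; the message text is a placeholder.

```typescript
type Item = Record<string, string>;

class DuplicateItemError extends Error {
  constructor(key: string) {
    super(`item with key ${key} already exists`); // placeholder message
    this.name = "DuplicateItemException";
  }
}

class ToyTable {
  private items = new Map<string, Item>();

  // INSERT semantics: refuse to overwrite an existing key.
  insert(key: string, item: Item): void {
    if (this.items.has(key)) throw new DuplicateItemError(key);
    this.items.set(key, item);
  }

  // Upsert semantics: silently replace whatever is there.
  upsert(key: string, item: Item): void {
    this.items.set(key, item);
  }

  get(key: string): Item | undefined {
    return this.items.get(key);
  }
}

const table = new ToyTable();
table.insert("user#1", { name: "first" });
try {
  table.insert("user#1", { name: "second" });
} catch (e) {
  console.log((e as Error).name);
}
```

An emulator that routes INSERT through the upsert path would overwrite the first item without raising anything, and application code that depends on the rejection would pass locally and fail in production.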

The bulk of the failures (30) are in Tier 3 - validation ordering and error message formatting. DynamoDB Local returns different errors than production for the same bad request. This might sound academic, but if you're writing error handling code against DynamoDB Local, it might not work the same way against the real thing. You'll get the right error type but the wrong message content, or the validations will fire in a different order.
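Validation ordering is simple to illustrate. In the sketch below, a request with two faults produces a different error depending on which check runs first. The rules, messages, and the `firstError` helper are invented for this example and are not DynamoDB's actual validators or message text.

```typescript
interface Req {
  tableName: string;
  limit: number;
}
type Validator = (r: Req) => string | null;

// Placeholder rules and messages, invented for illustration.
const checkTableName: Validator = (r) =>
  r.tableName.length < 3 ? "table name too short" : null;
const checkLimit: Validator = (r) =>
  r.limit < 1 ? "limit must be at least 1" : null;

// Return the first failure, as a validator that stops at the first invalid field would.
function firstError(r: Req, validators: Validator[]): string | null {
  for (const v of validators) {
    const msg = v(r);
    if (msg !== null) return msg;
  }
  return null;
}

// The same bad request, validated in two different orders.
const bad: Req = { tableName: "x", limit: 0 };
console.log(firstError(bad, [checkTableName, checkLimit])); // table name too short
console.log(firstError(bad, [checkLimit, checkTableName])); // limit must be at least 1
```

Both orderings reject the request, so a test that only asserts "this call fails" passes against either. Only a comparison against the recorded error catches the difference.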

These are the kinds of differences you only discover when you have a conformance suite to catch them. Without one, they hide as production bugs that are impossible to reproduce locally.

Why bother?

I built this because I needed it for Dynoxide. I wanted confidence that the thing I was building actually worked the way DynamoDB works, not the way I assumed it works. But the suite is useful beyond that.

If you're choosing between DynamoDB emulators for your development workflow, you can run the suite yourself and compare. The numbers above aren't marketing claims - they're reproducible results from a public repository that anyone can verify.

If you maintain a DynamoDB emulator or compatibility layer, you can use it to find and fix gaps. Point it at your endpoint, see what fails, fix the failures. The tiered structure tells you which failures matter most.

And if you're using DynamoDB Local in your CI pipeline and wondering why a test passes locally but fails against real DynamoDB - the conformance suite might tell you why. The 42 things DynamoDB Local gets wrong are 42 potential sources of exactly that kind of bug.

526 tests is solid coverage, but there are DynamoDB behaviours the suite doesn't exercise yet. If you've hit a case where an emulator diverges from real DynamoDB and you think it should be covered, open a PR. The only requirement is that the test passes against real DynamoDB - include the results artifact from a run against your own AWS account and I'll verify it against the ground truth pipeline. More tests make the suite more useful for everyone, not just Dynoxide.

The suite is at github.com/nubo-db/dynamodb-conformance. Clone it, run it, check the results for yourself.